SambaFlow developer documentation

SambaFlow™ developer documentation includes release notes, quickstart, tutorials, and the Python API reference.

What’s new

This release focused on performance improvements that are not directly visible to customers. In addition, we made stability improvements to tensor parallel compilation and mixed precision.

-

How to use tensor parallel mode (Beta). Stability enhancements to tensor parallel compilation, which automates and simplifies compiling a model to run on multiple RDUs.

-

Compile with mixed precision (Beta). Stability enhancements to mixed precision, which combines the use of different numerical formats (such as FP32 and BF16) to reduce memory footprint and speed up large neural network workloads.

For additional information, see the SambaFlow Software Release Notes.

Concepts

-

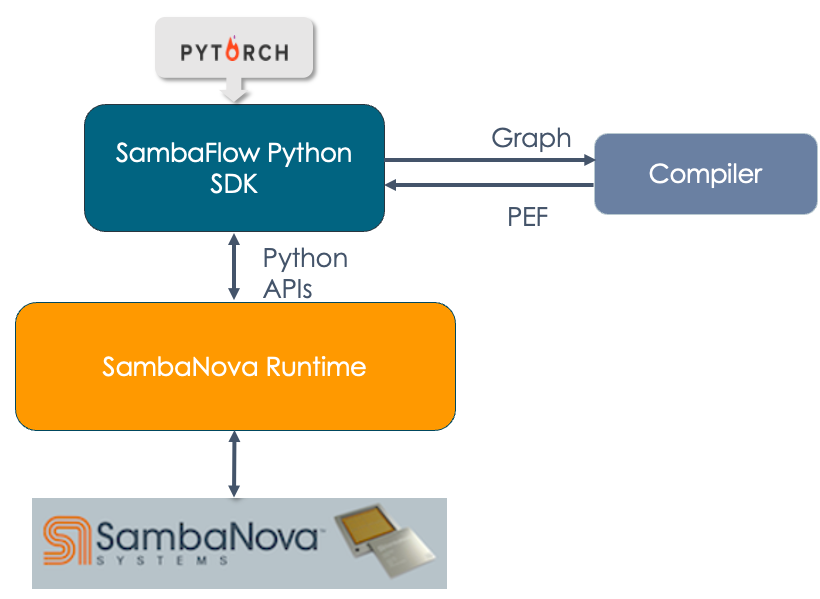

Architecture and workflows. Learn how SambaFlow fits into the SambaNova hardware and software stack, and about the typical compile and run workflow.

-

Models on RDU hardware. Understand the big picture: requirements and workflow for running on RDU.

-

Compilation overview. Explore the different layers of the compiler stack and learn what happens at each layer.

-

Compiler optimization modes. Learn about compiler optimization modes that give you control over operator fusion.

Tutorials

-

SambaFlow learning map. Overview of all tutorials and where to find instructions, tutorial files, and code discussion.

-

Hello SambaFlow! Learn how to compile and run your first model (duplicate of the README on GitHub) and explore the code discussion to help you create your own models.

-

Intermediate tutorial. Build on Hello SambaFlow! and learn about data download and running inference in Compilation, training, and inference (duplicate of the README on GitHub). The code discussion is in Examine LeNet model code.

-

Model conversion 101. Learn what’s required to run a PyTorch model on RDU from the detailed discussion of code examples in Convert existing models to SambaFlow.

-

Transformers on RDU. Use a pretrained Hugging Face model on RDU. Compile, train, and perform inference with a Hugging Face GPT model covers data preparation, the compile and training run, and running inference. The model code is discussed in Code elements of the training program and Code elements of the inference program.

-

Troubleshooting SambaFlow Tutorials. Resolve issues with tutorial compilation and training. We plan to update this topic over time.

How-to guides

-

How to use data parallel mode. Learn about improving performance by compiling and running in data parallel mode.

-

How to use tensor parallel mode (Beta). Learn how tensor parallel mode enables compiling a model to run more efficiently on RDUs.

-

Compile with mixed precision (Beta). Learn about compiling with mixed precision, which combines the use of different numerical formats (such as FP32 and BF16). Benefits include a reduced memory footprint and a speedup of large neural network workloads.

-

Compose complex operations with parallel patterns. Learn how to use existing parallel pattern operators to create operators that aren’t currently supported on RDU.

-

Use multigraph to partition models. Learn how to use the multigraph feature when your model consists of separate PyTorch modules and you want fine-grained control over when to run them.

-

Use sntilestat for performance analysis. Learn how to use the sntilestat utility interactively to see where your model spends time, and how to use the .json and .csv files the tool generates.

Reference

-

SambaNova messages and logs. Understand which messages to stdout are useful and where different logs are kept.

-

Arguments for compile. Reference to commonly used compiler arguments. Includes descriptions and examples.

-

Operator fusion rule yaml syntax. Reference to the operator fusion rule YAML syntax. These YAML files are used in conjunction with o1 compiler mode.

-

Hyperparameter reference. Reference to supported hyperparameters.

-

SambaNova PyTorch operator support. Reference to supported PyTorch operators. Includes links to the API Reference.

Tips and tricks

-

Uncorrectable Error Replay (Beta). Learn how the new UE replay feature works and how to use it.

-

Use Python virtual environments. Learn how to use the virtual environments that are included with SambaFlow example applications.

-

Use LayerNorm instead of BatchNorm. Learn how to convert a model from BatchNorm to LayerNorm.

-

Control what tokens are back-propagated on during training. Learn how to use token IDs to customize which tokens a model learns to generate, and which tokens a model attends to but does not learn to generate.

-

Use a supported or custom learning rate scheduler. Learn how to use supported and custom learning rate schedulers.

-

Best practices for hardware transition. If you’re migrating to SN30 hardware, these best practices help you understand the changes you might want to make to your models.

Other materials

-

Data preparation scripts. We have a public GitHub repository with two scripts for pretraining data creation, pipeline.py and data_prep.py.

-

SambaNova Runtime documentation. Information on logs, fault management, and other lower-level procedures.