Prerequisites

Before starting, ensure you have:

- A SambaCloud account and API key

- A Hugging Face account

Setup

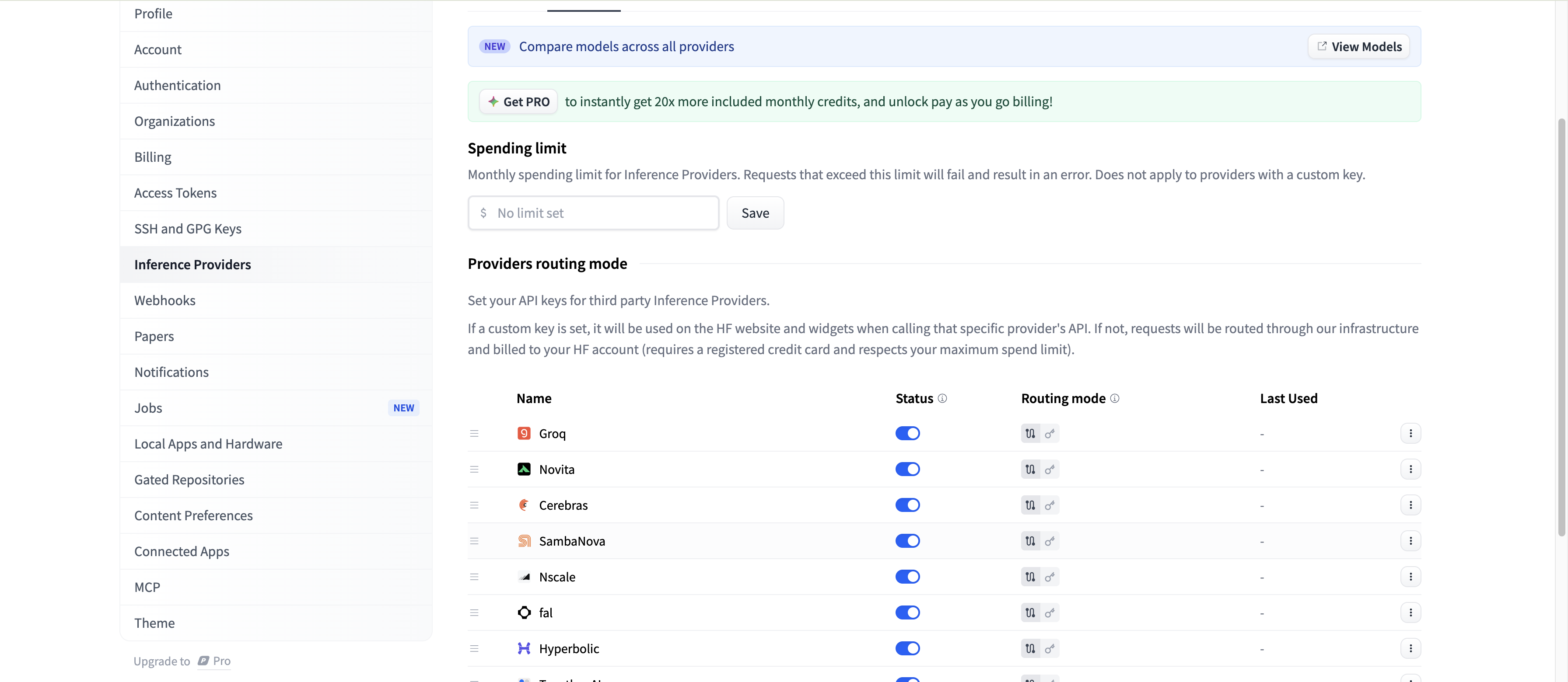

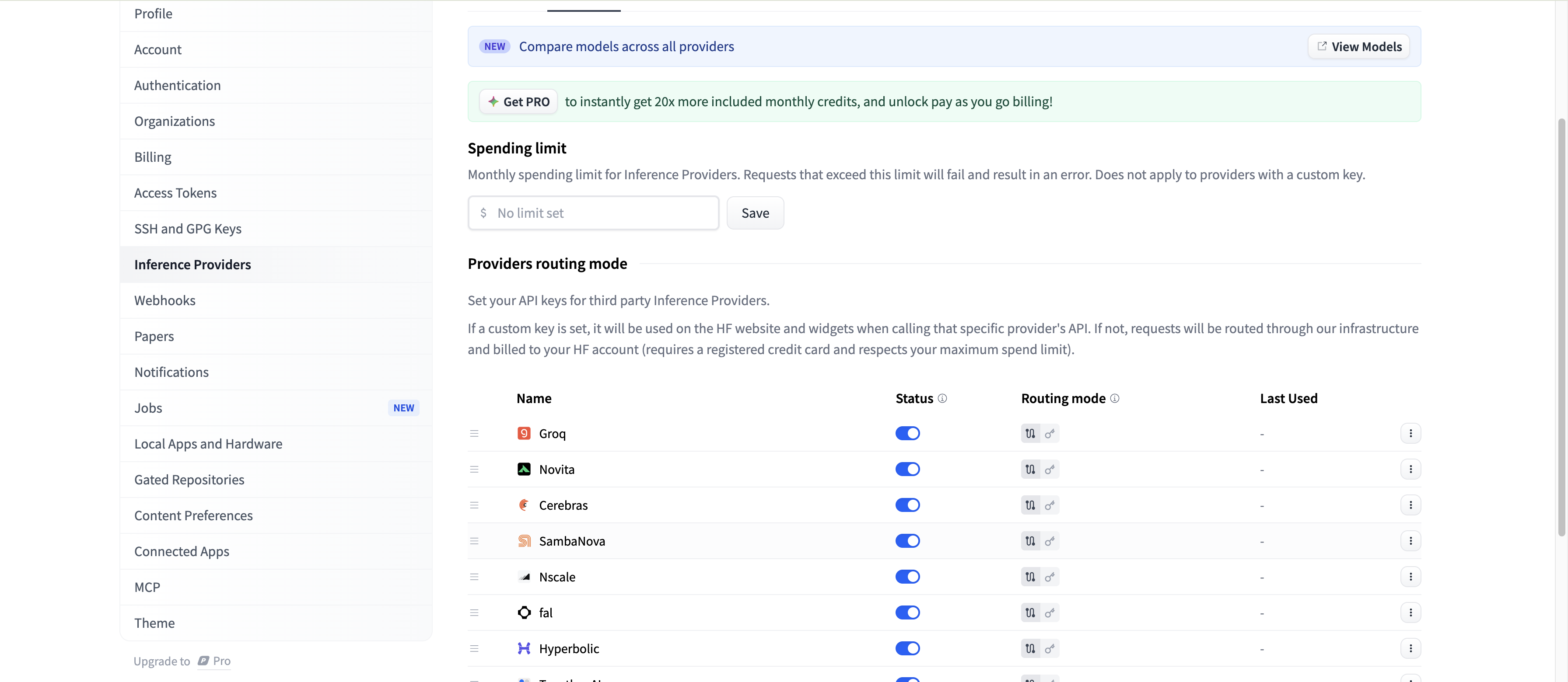

Enable SambaNova as an inference provider

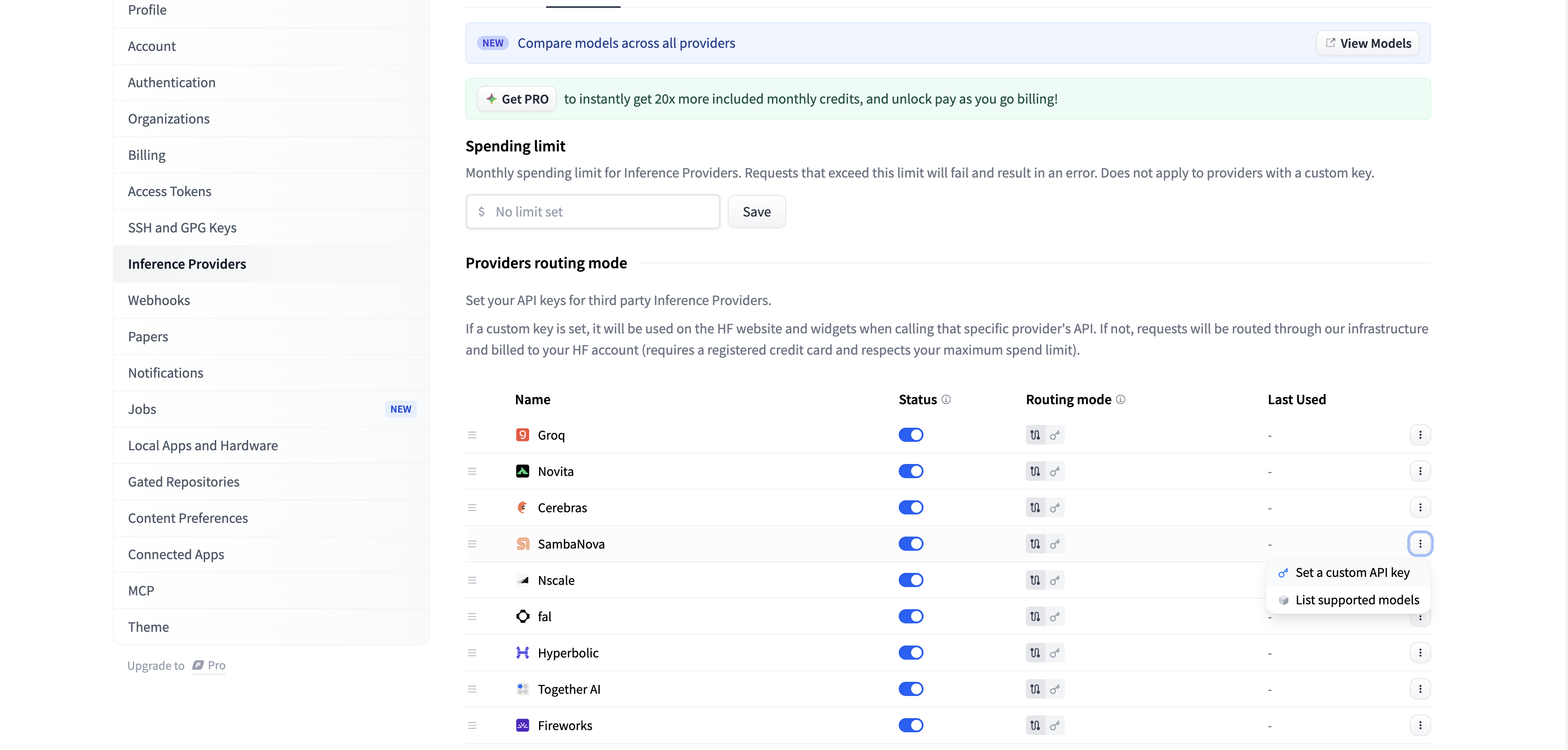

Go to your Hugging Face account settings, navigate to Inference Providers, and enable SambaNova.

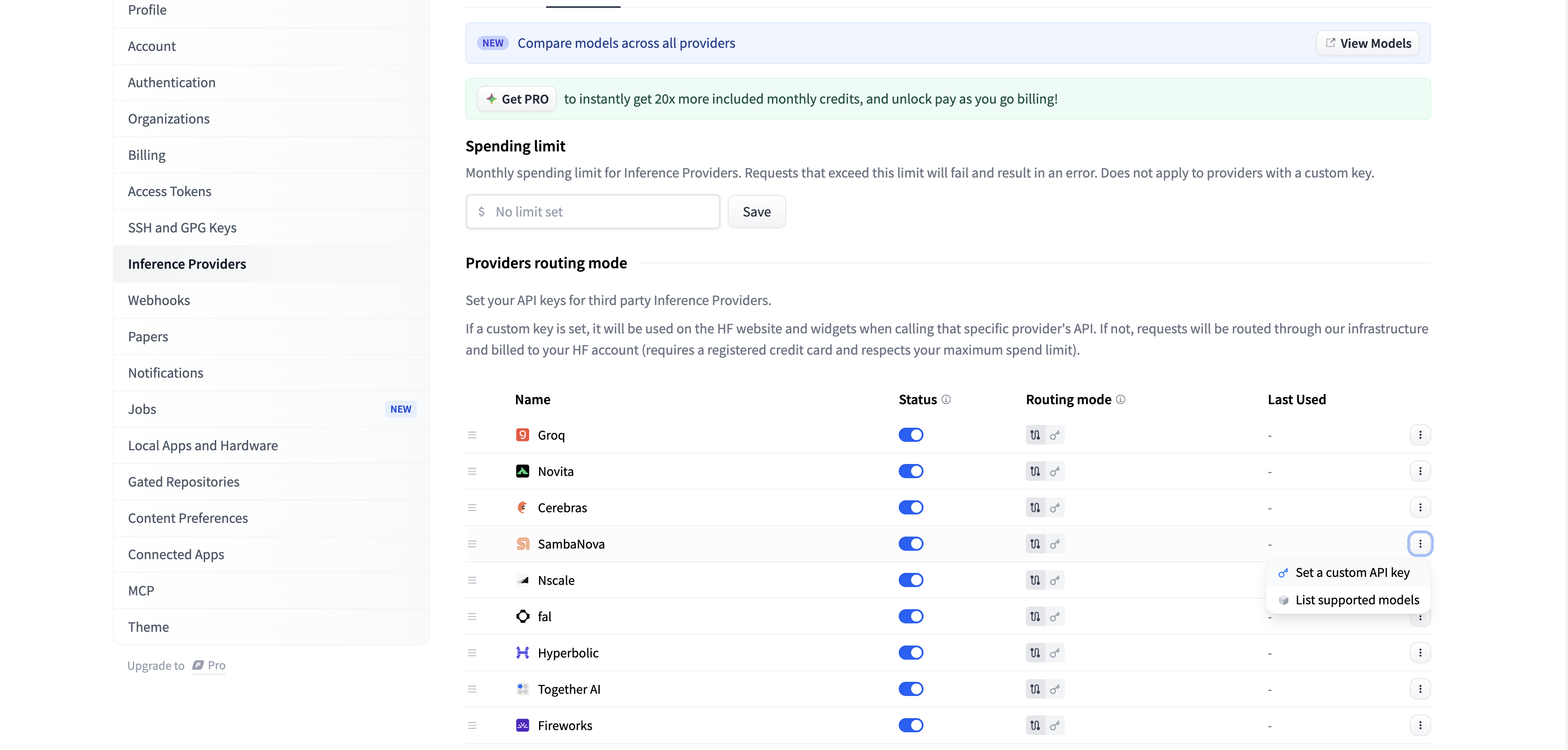

Add your SambaCloud API key

Enter your SambaCloud API key in the provider configuration. Get your API key from the SambaCloud portal.

Usage

There are two ways to use Inference APIs:

- Custom key: Your requests are sent directly to SambaNova using your own API key. Usage is billed to your SambaNova account.

- Routed by HF: Your requests are routed through Hugging Face, so you don’t need a separate provider API key. Usage fees are billed to your Hugging Face account.
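The two modes differ only in which credential you supply. The sketch below picks a mode from environment variables. It assumes a SambaCloud key is stored in `SAMBANOVA_API_KEY` and a Hugging Face token in `HF_TOKEN`. Both variable names and the `choose_auth` helper are illustrative choices here, not part of the SambaNova or Hugging Face tooling. When both are set, it prefers the SambaCloud key (custom-key mode).

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass(frozen=True)
class AuthChoice:
    mode: str       # "custom_key" or "routed_by_hf"
    api_key: str    # credential to pass to the client
    billed_to: str  # account that is charged for usage


def choose_auth(env: Optional[Mapping[str, str]] = None) -> AuthChoice:
    """Hypothetical helper: choose an authentication mode from env vars.

    Assumes SAMBANOVA_API_KEY holds a SambaCloud key and HF_TOKEN holds a
    Hugging Face token. A SambaCloud key takes precedence.
    """
    env = os.environ if env is None else env

    sambanova_key = env.get("SAMBANOVA_API_KEY")
    if sambanova_key:
        # Custom key: requests go directly to SambaNova, billed to SambaNova.
        return AuthChoice("custom_key", sambanova_key, "SambaNova")

    hf_token = env.get("HF_TOKEN")
    if hf_token:
        # Routed by HF: no provider key needed, billed to Hugging Face.
        return AuthChoice("routed_by_hf", hf_token, "Hugging Face")

    raise RuntimeError("Set SAMBANOVA_API_KEY or HF_TOKEN")


if __name__ == "__main__":
    choice = choose_auth()
    print(f"Mode: {choice.mode}, billed to: {choice.billed_to}")
```

The selected `api_key` is the credential you would pass when creating a client. Which account is billed follows from that choice.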

Using the Python SDK

The example below demonstrates how to run a model through SambaNova as the inference provider. You can authenticate with your SambaCloud API key. Make sure you have `huggingface_hub` version 0.28.0 or newer installed.
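To confirm the version requirement before running anything, you can query the installed package with the standard library. This is a minimal sketch. The `meets_minimum` and `huggingface_hub_ready` helpers are hypothetical names. The version parsing is deliberately simplified: it compares only the leading numeric release parts, so a pre-release such as `0.28.0rc1` is treated as `0.28.0`. For strict PEP 440 ordering, the third-party `packaging.version.Version` class is the more robust choice.

```python
from importlib.metadata import PackageNotFoundError, version

MINIMUM = (0, 28, 0)  # minimum huggingface_hub release required by this guide


def parse_release(v: str) -> tuple:
    """Return leading numeric release parts, e.g. '0.28.1' -> (0, 28, 1).

    Simplified: parsing stops at the first non-numeric character, so
    suffixes like 'rc1' or '.dev0' are ignored.
    """
    parts = []
    for piece in v.split("."):
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        if not digits:
            break
        parts.append(int(digits))
        if len(digits) != len(piece):
            break  # a suffix followed the number; stop here
    return tuple(parts)


def meets_minimum(v: str, minimum: tuple = MINIMUM) -> bool:
    """Compare a version string against the minimum, padding with zeros."""
    release = parse_release(v)
    release = release + (0,) * (len(minimum) - len(release))
    return release >= minimum


def huggingface_hub_ready() -> bool:
    """True if huggingface_hub is installed at 0.28.0 or newer."""
    try:
        installed = version("huggingface_hub")
    except PackageNotFoundError:
        return False
    return meets_minimum(installed)


if __name__ == "__main__":
    print("huggingface_hub >= 0.28.0:", huggingface_hub_ready())
```

If the check fails, upgrading with `pip install --upgrade huggingface_hub` brings the package to a current release.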